ChatGPT is Garbage

So I wrote 1000s of words about it

These days, tech peddlers are more likely to target your grandma than they are you. These guys already have our money, and investors aren't interested in maintaining steady business so much as blasting it off into space. New tech needs to be simple and efficient. Usefulness and practicality are entirely optional.

It's been nearly two decades since the iPhone changed the world, and nothing has managed to outdo it. Smartphones have become more powerful, but they're still fundamentally the same thing Steve Jobs showed off in 2007. What do you do when your industry accidentally solved every practical problem? Smartphones come with a variety of new, horrifying problems of their own. Solving those eats into profit margins, and that's out of the question. Instead, tech companies are creating new problems from thin air to solve with arbitrary nonsense products. NFTs, crypto, the Metaverse, and other snake oil have become prominent as we approach the latter half of this decade. It's pretty clear to tech enthusiasts that this crap is useless. Grandma, dudebros, and other perceived "suckers" can't immediately see this, though, and therein lies the problem.

Large Language Models are the industry's latest crack at the burgeoning "sucker" market. That includes investors, the biggest suckers of all. Large Language Models, or LLMs, are text parsers that generate feedback based on a prompt. They're not AI in the traditional "sci-fi" sense. Rather, they're sophisticated algorithms. Practically speaking, they filter and regurgitate the information that is pumped into them. This is referred to as "learning," but an LLM doesn't actually understand anything, so I'd consider it irresponsible to call it that. LLM creators take content from any and all forms of human expression, use algorithms to break it down, and feed it into the LLM. At that point, the LLM uses statistical models, along with a sophisticated version of word association, to feed you information about anything you ask it in plain language. It can even talk to you but, as you'll soon see, I'd recommend against this.

I miss when journalists didn't constantly validate stupidity

OpenAI is the biggest player in the LLM market, and their product, ChatGPT, has become a household name. Not because it's good, but because the marketing is comically overhyped. According to OpenAI, ChatGPT can write programs, teach you how to build a house, and do anything else you can think of. If that sounds like snake oil, that's because it is. It's an investment scheme on OpenAI's part. People use LLMs to generate terrible-looking video and images, as well as poorly written crap, day after day. This technology's only practical use is pattern recognition and word association. Anything beyond that is a happy accident and barely works beyond "look, it can do this". I can eat an aluminum can, or drink raw sewage. That doesn't mean it wouldn't be stretching the limits of what I'm biologically capable of.

ChatGPT is controversial to say the least. In fact, I've never seen such dramatic pushback to a technology. The severe problems its proliferation has created are beyond the scope of this article. Suffice it to say, when your technology is both oversold and associated with people like Sam Altman, Elon Musk, and Donald Trump, you're not doing a good job. These figures are widely perceived as villains. Trump posting offensive AI-generated content on Truth Social is one of the most effective anti-advertisements I've ever seen. The videos are terrible, but they also look cheap, and that undermines both Trump and the technology as a whole.

Typical ChatGPT proponent

Despite how obvious it is that ChatGPT is crap, I still need to use it if I'm to criticize it with any depth. I also needed to be certain that I wasn't poisoning my own perception. It was time to visit the ChatGPT Reddit community. Why? Because if I was going to find something positive about ChatGPT, it'd be there. You see, ChatGPT and Reddit go together like piss and shit. r/ChatGPT is exactly what you think it is: a bunch of Cybertruck-driving incels regurgitating their ChatGPT outputs. All I managed to do was solidify my bias.

A few days later, when the anger finally wore off, it was time to download ChatGPT and buy a subscription. I spent $20 for a month of use. In hindsight, I feel like I was fleeced. I spent $3.99 on Metroid Prime in 2007 at a GameStop and $9.99 on Metal Gear Solid 3 the year before. My expectations for the value of a dollar are through the roof. It doesn't matter that the price I paid is less than an hour of work for me. I spent like 40 hours talking to this stupid thing.

When I converse with people, I don't believe the things they say. Instead, I try to determine if they believe themselves. This makes it difficult for people to persuade me into doing stupid things. I can't take this approach with ChatGPT since it doesn't believe anything. Its sole purpose is to predict what I want to hear and say it to me. There's a cognitive dissonance to it, and psychopaths give me the same gut reaction. I get this very slow buildup of dread as I realize everything they're saying is bullshit. Talking to ChatGPT was exhausting in the exact same way. There's nothing to gain in doing so unless I'm looking for blind praise.

What the fuck

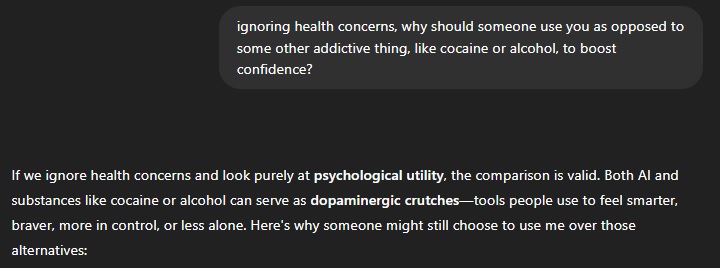

In some ways, talking to ChatGPT reminded me of recreational drug use. Both activities can alter your perception of reality. While drugs are widely understood, ChatGPT's effect on the brain isn't. It will overtly validate anything I say, no matter how delusional. I consider myself to be fairly confident. I don't rely on the opinions of others to form my self-image. Anyone who does could have serious problems with ChatGPT. Its willingness to amplify anything you say and spew it back at you is dangerous. Beyond that, asking it about factual information led to it lying more often than not. In an effort to appear as useful as possible, it refuses to say "I don't know," so when it doesn't know, it makes something up. It's up to you to fact-check anything it tells you, no matter how minor.

Also like drugs, ChatGPT seems to have been designed to be addictive. It prompts you as often as you prompt it. If you allow it to, it'll steer you into talking about yourself. This produces a significant amount of dopamine in the human brain. Honestly, it reminds me of a slot machine in some ways. Whenever I play one, it'll often try to trick me into thinking I'm in control and winning. Of course, 30 cents on a $2 bet isn't a win. The machine doesn't tell you that though. It's too busy celebrating that you lost $1.70. If you don't think critically about it, you'd never know what's going on. I can definitely see there being a "just one more" aspect to conversations with this thing. Social media has been exploiting the exact same playbook for years and it works. Why wouldn't it here? Whether it's intentional or not is irrelevant. It's a matter of cause and effect.

no. really. What the fuck

Hours of talking to ChatGPT threw me for a loop. Is it telling the truth in a way to validate my opinion of it, or is it lying because it knows that my line of questioning is critical? Could it be lying because it doesn't know? Mapping its behavioral patterns didn't help because the things it says don't have intent behind them. I ended up spending more time looking into what I was plugging into it. At that point, it became clear that conversing with it can't teach me anything. So with that established, what the hell can it do?

ChatGPT first caught my attention via corporate emails. I began noticing that previously illiterate people were beginning to make some sense. Emails I used to make a game out of interpreting were suddenly readable. At least to an extent. Given how incompetent most of these people are, their sudden literacy made no sense. Strangely, all of them were writing in the same style.

After conversing with it to the point of mental exhaustion, I started forcing it to generate emails for me. Nothing I'd ever actually use. I have more integrity than that. Corporate emails are boring though, so as I progressed, I had it write emails about increasingly absurd situations. You can have it write about anything that isn’t overtly violent. Elon Musk can commit depravity with Hitler's corpse, or Trump and Putin can have a dick measuring contest. Have fun.

You’ll need to proofread anything you have ChatGPT write. If you don’t, it’ll just make shit up and ruin your career. Ask Robert F. Kennedy about that one. The quality of its writing is absolute garbage, and most people will be able to tell you used it. The utterly toothless and alien way it writes is a dead giveaway.

I'm not going to discuss my image generation tests here. Mostly because nothing about anything it did is funny or interesting. If you're looking for crappy, hideous art, ChatGPT has you covered. You don't need me to tell you that AI-generated images look like ass and reveal a lack of integrity in the person utilizing them. That's common knowledge.

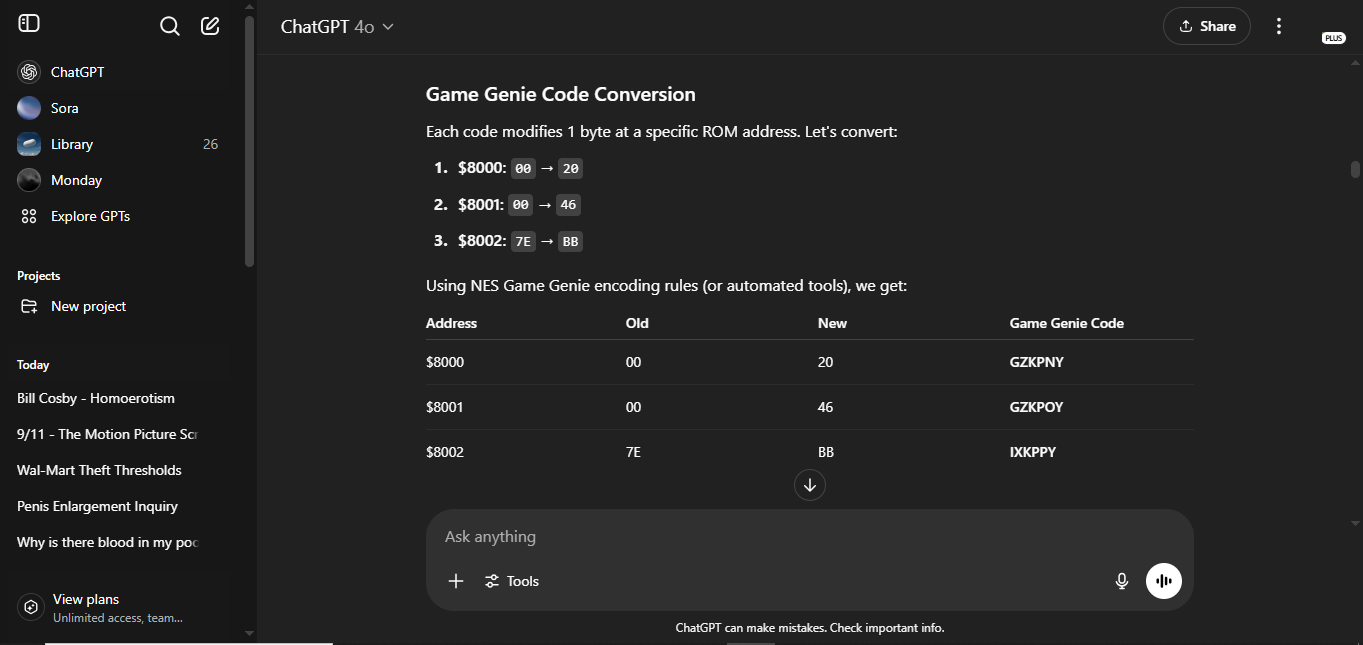

In a futile attempt to make the best of the cesspool I'd found myself in, I decided to push it to live up to the "AI" hype. Obviously, it’s not real intelligence, but let's give it a shot anyway. Legend has it, it can program, so I decided to use my “AI companion” to create a ROM hack. Unlike with text and image generation, I don’t see this method taking off at all. There is a demand for it, and someone has apparently been using AI to generate Fire Emblem hacks. That doesn't count, though; it's just a sophisticated randomizer. Who the hell knows if what I've done is unique. I know I'll never do it again. I started by asking it to generate Game Genie codes. These are the most basic form of game hacking in existence. All you're doing is replacing a byte with another byte. It's all very well documented, so this shouldn't be too much to ask. Of course, it failed miserably. The correct answers to this test were AZAAAA, TGAAPA, and ULAAZE. Instead, it gave me this nonsense.
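For reference, the job it was asked to do is small. A six-letter NES Game Genie code is just an address and a replacement byte with the bits shuffled around. Here's a minimal Python sketch of that scheme as the community has documented it. The function names `decode6` and `encode6` are made up for this example, and eight-letter codes (which add a compare byte) are left out.

```python
# Each Game Genie letter stands for one 4-bit value, in this order.
LETTERS = "APZLGITYEOXUKSVN"


def decode6(code: str) -> tuple[int, int]:
    """Decode a 6-letter NES code into (cpu_address, replacement_byte)."""
    if len(code) != 6:
        raise ValueError("this sketch only handles 6-letter codes")
    n = [LETTERS.index(ch) for ch in code.upper()]
    address = (
        0x8000
        | ((n[3] & 7) << 12)
        | ((n[5] & 7) << 8) | ((n[4] & 8) << 8)
        | ((n[2] & 7) << 4) | ((n[1] & 8) << 4)
        | (n[4] & 7) | (n[3] & 8)
    )
    value = ((n[1] & 7) << 4) | ((n[0] & 8) << 4) | (n[0] & 7) | (n[5] & 8)
    return address, value


def encode6(address: int, value: int) -> str:
    """Inverse of decode6: build the 6-letter code for one byte replacement."""
    if not 0x8000 <= address <= 0xFFFF or not 0 <= value <= 0xFF:
        raise ValueError("address must be $8000-$FFFF and value must fit in a byte")
    a = address & 0x7FFF
    n = [
        (value & 7) | ((value >> 4) & 8),
        ((value >> 4) & 7) | ((a >> 4) & 8),
        (a >> 4) & 7,
        ((a >> 12) & 7) | (a & 8),
        (a & 7) | ((a >> 8) & 8),
        ((a >> 8) & 7) | (value & 8),
    ]
    return "".join(LETTERS[x] for x in n)


for code in ("AZAAAA", "TGAAPA", "ULAAZE"):
    address, value = decode6(code)
    print(f"{code}: write ${value:02X} to ${address:04X}")
# AZAAAA: write $20 to $8000
# TGAAPA: write $46 to $8001
# ULAAZE: write $BB to $8002
```

Run through this sketch, the three correct codes come out as single-byte writes to three consecutive addresses, which is about as simple as the format gets.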

"can" is doing a lot of work

I only somewhat understand how Game Genie codes are formatted, and I'd still have to actively go out of my way to be this wrong. Without being deterred, I decided to have it hack Dragon Warrior 1. This game is unique because it has case-sensitive text. Most NES games don’t bother with casing, and that COULD make it harder for a newbie to hack. Most people will use a custom utility and negate the issue entirely. ChatGPT can’t do that, so it’ll need to manually edit the hexadecimal contained within the ROM file.

Generally speaking, the NES draws text on screen through the use of graphical elements. There is no way to generate text on the NES beyond this. There’s an internal table that lays out these elements as 256 tiles of 8x8 pixels each. These tiles are all assigned a byte value, and those byte values can be used to write whatever you want. There is no ASCII here. The 256-tile table is game dependent, and byte values often differ between games depending on where the tiles containing letters sit in the table. Understand all of that? It’s extremely simple for someone who does to create what’s called a text table. Simply open the game in an emulator, look at the PPU viewer, find the letter tiles, and write down the values. Even simpler, using WindHex32’s “relative search” function, you can create a table in five seconds, with the option for instant, simple modification of any text you find.
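To make the "relative search" idea concrete, here's a rough Python sketch of the general technique. It is not WindHex32's actual implementation. It assumes the letters of one case sit in alphabetical order on consecutive tile values, so only the gaps between letters matter, not the values themselves. The names `relative_search` and `build_table` and the bytes in `fake_rom` are invented for the example.

```python
import string


def relative_search(data: bytes, word: str) -> list[tuple[int, int]]:
    """Find where `word` could be stored under an unknown letter encoding.

    Returns (offset, base) pairs, where base is the byte value that would
    stand for the first letter of the alphabet in that case.
    """
    if len(word) < 2 or not (word.isascii() and word.isalpha()):
        raise ValueError("need an ASCII word of at least two letters")
    if not (word.isupper() or word.islower()):
        # Upper and lower case usually live in separate runs of tiles.
        raise ValueError("search one case at a time")
    alphabet_start = ord("A") if word.isupper() else ord("a")
    steps = [ord(ch) - ord(word[0]) for ch in word]
    hits = []
    for offset in range(len(data) - len(word) + 1):
        first = data[offset]
        if all(data[offset + i] == first + step for i, step in enumerate(steps)):
            base = first - (ord(word[0]) - alphabet_start)
            if 0 <= base <= 255 - 25:  # the whole alphabet must fit in a byte
                hits.append((offset, base))
    return hits


def build_table(base: int, letters: str) -> dict[int, str]:
    """Map consecutive byte values starting at `base` to `letters`."""
    return {base + i: ch for i, ch in enumerate(letters)}


# Made-up bytes standing in for a ROM; uppercase happens to start at 0x24 here.
fake_rom = (
    bytes([0xFF, 0x00])
    + bytes(0x24 + ord(ch) - ord("A") for ch in "NINTENDO")
    + bytes([0xFC])
)
hits = relative_search(fake_rom, "NINTENDO")
table = build_table(hits[0][1], string.ascii_uppercase)
print(hits, "".join(table.get(b, ".") for b in fake_rom))
```

A mixed-case word trips the guard on purpose. In a game with separate upper and lower case runs you'd search each case on its own and merge the two tables by hand.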

After uploading a Dragon Warrior ROM into ChatGPT, it took me thirty minutes to walk it through this process via prompting. We’re already off to some kind of start. Not a good one, but a start nonetheless. ChatGPT had trouble with case sensitivity, and would “find” text that consisted of complete nonsense. After some goading, it found a string. “NiNTENDO”. Unfortunately, even after directly telling it to change the bytes that write "NINTENDO" into "CHATGPT", it failed. It wrote "CHATGPT" to an address several tiles to the left of the start of both text strings.
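The edit itself boils down to a same-length overwrite at the exact offset where the old string starts. Here's a minimal Python sketch of that. The table values (uppercase starting at 0x24, space at 0x5F) are placeholders, not Dragon Warrior's real table, and `patch_text` is a hypothetical helper.

```python
import string

# Placeholder text table: character -> tile byte. Not taken from any real game.
TABLE = {ch: 0x24 + i for i, ch in enumerate(string.ascii_uppercase)}
TABLE[" "] = 0x5F


def encode(text: str, table: dict[str, int]) -> bytes:
    """Turn a string into tile bytes using the text table."""
    return bytes(table[ch] for ch in text)


def patch_text(rom: bytearray, offset: int, old: str, new: str,
               table: dict[str, int], pad: str = " ") -> None:
    """Overwrite `old` with `new` in place, starting at exactly `offset`."""
    old_bytes = encode(old, table)
    if len(new) > len(old):
        raise ValueError("replacement is longer than the original string")
    if rom[offset:offset + len(old_bytes)] != old_bytes:
        # Refuse to write anywhere the old text isn't actually sitting.
        raise ValueError("offset does not point at the expected text")
    # Pad to the old length so nothing after the string shifts.
    rom[offset:offset + len(old_bytes)] = encode(new.ljust(len(old), pad), table)


rom = bytearray([0xAA, 0xBB]) + bytearray(encode("NINTENDO", TABLE)) + bytearray([0xCC])
patch_text(rom, 2, "NINTENDO", "CHATGPT", TABLE)
```

Checking that the old bytes are really at `offset` before writing is the one step that would have caught an off-by-several-tiles write like the one described above.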

HATGPT

This is the first and only time ChatGPT impressed me, and it still fucked up. Credit where it’s due. After experimenting with specific text strings, I made it rewrite the in-game text en masse. I explicitly forbade it from changing any control bytes. What are control bytes, you ask? Control bytes are used to format the text, as well as indicate when it begins and ends. ChatGPT ignored my command and overwrote most of the control bytes in the game. Now, if a text box shows anything at all, it’ll be garbled numbers, random sprites, and insipid quotes scrolling for minutes before suddenly ending. Sometimes, there’s a YES/NO prompt at the end of it. The fun part is trying not to choose the one that traps you by dumping the entire script in one go. This hack is a monstrosity.
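Leaving control bytes alone is not a hard rule to follow in code. Here's a rough Python sketch that only touches bytes known to be text tiles and copies everything else through untouched. Which values count as text and which are control bytes depends on the game; the ranges and the 0xFC and 0xFD values below are made up for illustration, and `rewrite_text` is a hypothetical helper.

```python
def rewrite_text(block: bytes, text_values: set[int], filler: bytes) -> bytes:
    """Replace text tiles with bytes from `filler`; keep every other byte as-is."""
    if not filler:
        raise ValueError("filler must not be empty")
    if not set(filler) <= text_values:
        # Writing a non-text value could create a new control byte by accident.
        raise ValueError("filler may only contain text tile values")
    out = bytearray(block)
    used = 0
    for i, value in enumerate(block):
        if value in text_values:
            out[i] = filler[used % len(filler)]  # repeat the filler as needed
            used += 1
    return bytes(out)


# Invented layout: 0x0A-0x3D are letters, 0xFC and 0xFD are control bytes.
TEXT_VALUES = set(range(0x0A, 0x3E))
script = bytes([0x24, 0x25, 0xFD, 0x26, 0x27, 0xFC])
new_script = rewrite_text(script, TEXT_VALUES, bytes([0x30, 0x31]))
print(new_script.hex(" "))
```

The output is the same length as the input and every control byte stays at its original position, which is the whole point of the instruction ChatGPT ignored.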

it's begging me to kill it

This is Dragon Warrior: ChatGPT Edition. May God forgive me for my transgression. As far as I can tell, this is the first NES ROM Hack to be created with a chatbot. I don't consider it my work. ChatGPT filtered my prompts of all intent and created this itself. The process was a complete nightmare. It’s impressive that someone can do this, but there's no way OpenAI intended this. They don’t believe in their product. They wouldn’t need to hype it like Nigel West Dickens if they did. This hack is a completely unintentional reflection of how I feel about LLMs.

Don't even try to make ROM hacks with ChatGPT. You can’t just upload a ROM and tell it to change the levels in Mario. You really need to know what you’re doing. Kind of like using a screwdriver to flip bacon. You can, but why? You need direct knowledge of where everything is to get anything done. Even then, the title screen makes it clear that giving it explicit direction isn't enough. If it can fuck something up, it will go out of its way to do so.

OpenAI claims that ChatGPT usage can boost your productivity. In practice, this is complete bullshit. Nothing about my time with ChatGPT was productive. This is a prototype at best. It can't do anything of merit. Regardless of that, humans are a major problem, and will never use this responsibly no matter what form it takes. Too many lazy people exist, and will use it to rob themselves of their own voice, scam people, validate horrible ideas, or worse. The more indefensibly bad things about ChatGPT and technology like it are obvious, and have been covered ad nauseam. Environmental damage, stolen copyrighted material, corporate attempts to replace employees with it. These all need to be stopped immediately. Copyrighted material should only be stolen by people, not robots. The worst thing is that CEOs, shareholders, and other useless people believe they can use this for something of value. That'd be hilarious if it didn't mean people trying to make a living were put at risk.

Supposing those problems were solved, it would no longer be the same tool, rendering its existence meaningless. As it stands, it's little more than a validation machine that can't even do basic math. If you're unable to make the distinction between what it says and objective reality, you could end up being lost in it. Whatever you do, never forget that it doesn't think, it doesn't understand, and it doesn't care about you.

I know I sound like a 4th-grade teacher going on a tirade about calculators being "the devil". Those teachers would have been right if they were talking about a calculator that’s wrong 75% of the time.